I built this website to share more about myself online and the side projects I’ve been working on; it also helps me keep track of my progress throughout my career.

Another objective of mine is to become a better writer and a better communicator, especially when having to communicate technical concepts in simple words.

Stack

Requirements #

The non-functional requirements for this website were easy to establish, especially after looking at several other personal websites:

- Quick to build

- Great performance

- Uncomplicated maintenance

- Cheap to run

- Simple to automate & deploy

A static site generator that produces a fully static website is a perfect fit here. Static site generators reduce complexity by storing all content pages in Markdown format. This means that the content can be tracked and managed with version control software (like git), instead of requiring a database to store it.

Because the site is static, no web servers, load balancers, or other hardware are needed. Instead, a content delivery network can absorb the traffic and serve the files worldwide with great performance and at low cost.

There are many static site generators available today that are mature and ready for production. They include Jekyll, Gatsby, Hugo, Next.js, and Eleventy, just to mention a few.

Hugo #

I chose Hugo for this small project because it was recommended to me by a friend.

Maintenance is straightforward with Hugo; editing a couple of Markdown files is enough to update or add content on the website.

Performance is hard to beat when hosting a static website in the cloud today; all generated files are either HTML or other static assets (images, web fonts, CSS, JS), and putting everything behind a CDN such as CloudFront makes pages load quickly regardless of the user’s location.

This brings a cost advantage as well. Hosting this Hugo static website costs almost nothing, thanks to AWS’s economies of scale and its pay-for-what-you-use pricing model.

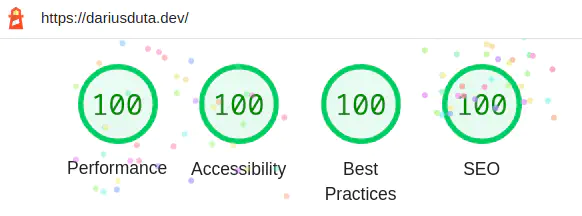

With minimal effort put into configuring CloudFront’s caching behaviour, I was able to achieve the maximum score on Google’s Lighthouse test on both desktop & mobile categories.
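As a rough illustration of what that caching configuration can involve, here is a minimal sketch of choosing a `Cache-Control` header per file type, which CloudFront can honour when the origin sends it. The helper name `cacheControlFor`, the extension list, and the max-age values are all assumptions made for this example, not the settings used on this site. The long-lived `immutable` policy only makes sense if asset file names change whenever their content changes.

```typescript
// Hypothetical helper: choose a Cache-Control value from the file extension.
// The durations below are illustrative assumptions, not this site's settings.
const ONE_HOUR = 60 * 60;
const ONE_YEAR = 365 * 24 * 60 * 60;

// Static assets that are assumed to be fingerprinted (name changes with content).
const LONG_LIVED_EXTENSIONS = ["css", "js", "png", "jpg", "svg", "woff", "woff2"];

function cacheControlFor(path: string): string {
  const dot = path.lastIndexOf(".");
  const ext = dot >= 0 ? path.slice(dot + 1).toLowerCase() : "";

  if (LONG_LIVED_EXTENSIONS.indexOf(ext) >= 0) {
    // Safe to cache for a long time because a new build produces a new name.
    return `public, max-age=${ONE_YEAR}, immutable`;
  }
  // HTML pages and anything unrecognised: short cache, so edits show up soon.
  return `public, max-age=${ONE_HOUR}`;
}

console.log(cacheControlFor("index.html"));
console.log(cacheControlFor("css/main.css"));
```

Keeping HTML on a short lifetime and assets on a long one is a common compromise: pages pick up new content quickly, while the heavier files are served from the cache.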

Infrastructure #

The AWS resources are provisioned through the AWS Cloud Development Kit (CDK), a framework built by AWS and released in 2019. CDK is awesome because it allows us to define AWS infrastructure in a programming language, and its output is just a CloudFormation template.

In my opinion, CDK makes authoring CloudFormation templates much more straightforward, because it allows you to make use of the typing system of a programming language, in my case TypeScript. This saves me a lot of time, since I don’t have to consult the online documentation for the CloudFormation resources I need to provision.

CDK example #

The following CDK code is enough to request a new ACM certificate for a domain and automatically validate its ownership through the Route53 DNS service.

The resulting certificate’s ARN is stored in SSM Parameter Store, so it can be used by stacks that deploy in different AWS Regions.

Those ~20 lines of CDK synthesize into a CloudFormation template that contains over 100 lines of YAML:

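```typescript
// Import paths assume CDK v2 (aws-cdk-lib); adjust them for CDK v1.
import * as cdk from "aws-cdk-lib";
import * as cert from "aws-cdk-lib/aws-certificatemanager";
import * as route53 from "aws-cdk-lib/aws-route53";
import * as ssm from "aws-cdk-lib/aws-ssm";
import { Construct } from "constructs";

// Assumed props type: StackProps plus the three values this stack reads.
export interface AcmStackProps extends cdk.StackProps {
  domainName: string;
  hostedZone: route53.IHostedZone;
  acmCertParameterName: string;
}

export class AcmStack extends cdk.Stack {
  public readonly acmParam: ssm.StringParameter;

  constructor(scope: Construct, id: string, props: AcmStackProps) {
    super(scope, id, props);

    // Request the certificate in us-east-1, where CloudFront expects it.
    const acmCert = new cert.DnsValidatedCertificate(this, "InfraCertificate", {
      domainName: props.domainName,
      hostedZone: props.hostedZone,
      region: "us-east-1",
    });

    // Store the ARN so that stacks in other regions can look it up.
    this.acmParam = new ssm.StringParameter(this, "AcmCertificateParameter", {
      parameterName: props.acmCertParameterName,
      stringValue: acmCert.certificateArn,
    });
  }
}
```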

The DnsValidatedCertificate construct deploys a separate Lambda function that will begin the process of requesting a new TLS certificate from AWS ACM.

The Lambda function will add the required DNS records to the provided Route53 Hosted Zone. Then, the function will keep checking every 30 seconds whether the DNS validation has succeeded, and only then signal the completion of the resource.
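The general shape of such a wait loop can be sketched as follows. This is not the code of the Lambda function that CDK deploys; `pollUntil`, its parameters, and the injected `check` and `sleep` functions are hypothetical names chosen for this example, so that the sketch stays independent of any AWS SDK.

```typescript
// Hypothetical polling helper, not the actual Lambda implementation.
type Sleep = (ms: number) => Promise<void>;

const realSleep: Sleep = (ms) =>
  new Promise<void>((resolve) => setTimeout(resolve, ms));

// Calls `check` until it resolves to true, waiting `intervalMs` between tries.
// Resolves with the number of attempts used, or rejects after `maxAttempts`.
async function pollUntil(
  check: () => Promise<boolean>,
  intervalMs: number,
  maxAttempts: number,
  sleep: Sleep = realSleep,
): Promise<number> {
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    if (await check()) {
      return attempt;
    }
    // No point in waiting after the final failed attempt.
    if (attempt < maxAttempts) {
      await sleep(intervalMs);
    }
  }
  throw new Error(`condition not met after ${maxAttempts} attempts`);
}
```

With a 30-second interval, a call would look like `pollUntil(isCertificateIssued, 30000, 20)`, where `isCertificateIssued` stands for whatever function asks ACM for the certificate status. Passing `sleep` as a parameter makes the loop easy to test without real delays.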

CodePipeline #

Connecting to GitHub #

There are two ways of connecting CodePipeline to GitHub. The first one involves the AWS console, where you complete the OAuth flow and sign in to GitHub, so that you can grant the AWS Connector for GitHub access to your GitHub repositories.

The second method involves using the CDK construct GitHubSourceAction.

For that to work, a GitHub personal access token must be generated and stored as a secret in AWS Secrets Manager. This access token is then supplied to GitHubSourceAction as the oauthToken.

I chose to use a GitHub access token, because it can be automated more easily and involves no further modifications to the CDK code.

In addition to that, a Lambda function can be used to automate the refresh of the GitHub access token and store its new value back into Secrets Manager.

CI/CD Pipeline #

This was the first time I used CDK to set up a CodePipeline. For static site generators, the deployment process is simple and usually consists of these three stages:

- Source stage

  - Runs on every new commit on a specific git branch

  - Code is downloaded from the GitHub repository

- Build stage

  - Uses CodeBuild and, following the instructions from buildspec.yml, calls Hugo to generate the files

  - Uploads the generated HTML files to the target S3 Bucket

- Deploy stage

  - Calls a custom Lambda function that invalidates the CloudFront cache

  - After the invalidation, CloudFront will request & cache the newly-uploaded files from S3
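To make the deploy stage a little more concrete, here is a minimal sketch of how the input for a CloudFront invalidation request could be assembled. It is not the Lambda function used on this site: the helper `buildInvalidationInput` and the sample distribution ID are hypothetical, and only the field names follow the shape of CloudFront's CreateInvalidation request. Sending the request through the AWS SDK is left out on purpose.

```typescript
// Shape of the CreateInvalidation request input, as used by the AWS SDKs.
interface InvalidationInput {
  DistributionId: string;
  InvalidationBatch: {
    CallerReference: string;
    Paths: { Quantity: number; Items: string[] };
  };
}

// Hypothetical helper: normalise the paths and build the request input.
function buildInvalidationInput(
  distributionId: string,
  paths: string[],
  callerReference: string,
): InvalidationInput {
  // Invalidation paths must start with a slash.
  const normalized = paths.map((p) => (p.charAt(0) === "/" ? p : "/" + p));
  // Drop duplicates so Quantity matches the number of distinct paths.
  const items = normalized.filter((p, i) => normalized.indexOf(p) === i);

  return {
    DistributionId: distributionId,
    InvalidationBatch: {
      // Must be unique per request; a pipeline execution ID would work.
      CallerReference: callerReference,
      Paths: { Quantity: items.length, Items: items },
    },
  };
}

// "E123EXAMPLE" is a placeholder distribution ID.
const input = buildInvalidationInput("E123EXAMPLE", ["/*"], "deploy-1");
console.log(JSON.stringify(input));
```

Invalidating `/*` is the simplest option for a small site, since it clears every cached file in one request.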

Conclusion #

For my use case, I think Hugo is a good choice. The total cost, including the domain, works out at around $2 per month; for that, I get reliable and performant cloud hosting through Amazon CloudFront and the AWS network.

There is very little maintenance required; the TLS certificate renews itself automatically through AWS ACM, content is kept in Markdown files and CodePipeline deploys every time updates are committed to GitHub.

A good setup for the years to come. 😄